The Market Has an AI Value Problem

The market does not have an AI access problem anymore. It has an AI value problem.

Recently, enterprise conversations around AI have started to sound very familiar. The tools are everywhere. The demos are polished. The expectations are high. And yet, when leadership teams get past the excitement and ask one simple question, the room usually gets quiet: is this actually saving us money, improving outcomes, or creating durable business value?

That is the real issue underneath a lot of today’s AI adoption stories. The technology has moved fast. Organizational maturity has not.

The latest numbers make that gap hard to ignore. Nearly 89% of organizations have adopted AI tools in some form, but only 23% can accurately measure return on investment[1]. At the same time, global AI spending is approaching $200 billion, while 95% of enterprise AI pilots still fail to deliver meaningful business impact[1][2]. Those numbers should force a more disciplined conversation. The question is not whether AI matters. It clearly does. The question is why so many organizations are still struggling to turn adoption into outcomes.

Candidly, this is where a lot of AI strategy breaks down.

Adoption Is Not the Same as ROI

For the individual user, AI can feel immediately useful. A marketer drafts faster. A seller summarizes meetings faster. A consultant structures a proposal faster. A developer can prototype faster. These are real gains, and they matter. But at the enterprise level, personal efficiency does not automatically become team performance, and team performance does not automatically become financial value. That is the productivity paradox sitting at the center of the current market[2].

We are seeing a divergence between individual capability and organizational readiness. The average employee may already have a practical relationship with AI. The company around them may still be operating without shared workflows, clear governance, consistent measurement, or any meaningful definition of success. In that environment, AI becomes a collection of disconnected habits instead of a scalable business capability.

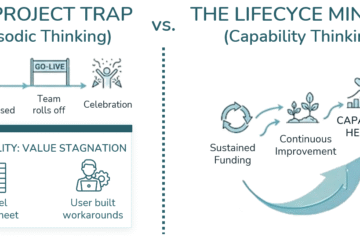

This is one reason the market feels stuck in what many people now call pilot purgatory. Companies launch experiments. They test copilots. They run workshops. They generate enthusiasm. But they do not operationalize the change. The tool exists, but the system around it does not. Without workflow redesign, role clarity, and outcome tracking, the pilot becomes another isolated initiative that never translates into margin improvement, revenue lift, or better execution[2].

That is also why “time saved” is such a weak measurement on its own.

Time saved sounds persuasive because it is simple. It gives executives a quick headline and vendors a clean narrative. But time saved is not the same thing as money made. If AI helps someone draft a document twenty minutes faster, what happens next? Does that time reduce labor cost? Does it speed up a revenue-generating process? Does it improve proposal quality, shorten sales cycles, increase account growth, or help a team produce better decisions? If the answer is unclear, then the organization is not measuring value. It is measuring activity[4].
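One way to make that distinction concrete is to separate the nominal value of saved time from the share of it that actually lands in valued work. The sketch below is illustrative only: the names `TimeSavedEstimate`, `nominal_value`, `realized_value`, and especially `realization_rate` are assumptions introduced here, not terms from the cited sources, and every number in the usage example is hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TimeSavedEstimate:
    """Hypothetical inputs for one AI-assisted task type."""
    minutes_saved_per_task: float  # e.g. the twenty minutes in the drafting example
    tasks_per_month: int           # assumed monthly volume
    loaded_hourly_cost: float      # assumed fully loaded labor cost per hour
    realization_rate: float        # assumed share (0..1) of saved time redirected to valued work


def nominal_value(e: TimeSavedEstimate) -> float:
    """Headline figure: hours saved multiplied by hourly cost."""
    hours_saved = e.minutes_saved_per_task * e.tasks_per_month / 60
    return hours_saved * e.loaded_hourly_cost


def realized_value(e: TimeSavedEstimate) -> float:
    """Nominal value discounted by how much saved time is actually put to use."""
    if not 0.0 <= e.realization_rate <= 1.0:
        raise ValueError("realization_rate must be between 0 and 1")
    return nominal_value(e) * e.realization_rate


if __name__ == "__main__":
    # Illustrative numbers only; none of these come from the cited research.
    estimate = TimeSavedEstimate(
        minutes_saved_per_task=20,
        tasks_per_month=30,
        loaded_hourly_cost=90.0,
        realization_rate=0.25,
    )
    print(f"nominal:  {nominal_value(estimate):.2f}")
    print(f"realized: {realized_value(estimate):.2f}")
```

The realization rate is the part most organizations cannot fill in. If nobody can say where the saved minutes went, the honest value for that field is unknown, and the headline number is a measure of activity rather than value.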

The better question is whether core workflows are improving. That is where the more credible benchmark data gets interesting. In organizations with strong adoption, sales teams have seen proposal cycle time improve by 22% quarter over quarter. Customer service teams have reduced average handle time by 11% while improving customer satisfaction. Finance teams have shortened the close cycle by two days, and HR teams have reduced time-to-post by 25%[3].

Those are not soft benefits. Those are operational outcomes tied to business performance.

The Modern AI Worker Is Not Just a Better Prompter

This is where the idea of the modern AI-powered worker becomes useful, but only if it is defined carefully.

Whether or not that exact label becomes the one the market keeps, the underlying concept matters. This is not just a person who knows how to prompt well. It is not a power user chasing isolated productivity gains. The modern AI worker is someone operating inside a system that can absorb, govern, and compound AI-driven work.

That means moving from operators to curators of outcomes.

An operator uses AI to complete isolated tasks. A curator of outcomes uses AI inside a governed, repeatable workflow where human judgment, verification, and business context still matter. The operator focuses on output volume. The curator focuses on decision quality, execution clarity, and business fit.

This shift is important because AI often removes or reduces the repetitive grind of work. It can summarize, draft, synthesize, and organize. But once that work is accelerated, the remaining human responsibility becomes more valuable, not less. Teams still need people to frame the problem correctly, interpret context, challenge bad outputs, validate assumptions, and connect work to operational goals. In other words, AI does not eliminate the need for judgment. It raises the importance of judgment.

Microsoft’s Maturity Is Starting to Change the Equation

This is also where Microsoft’s positioning deserves more attention.

The most compelling version of the Microsoft story is not that it has simply added AI features to an existing software stack. It is that the Microsoft ecosystem is becoming AI-infused at the core. That matters because it changes the role AI can play. Instead of sitting off to the side as a bolt-on assistant, AI starts to work inside the places people already operate: email, meetings, collaboration threads, business applications, workflow systems, and enterprise data.

That is a much stronger foundation for enterprise value.

One of the more useful ways to think about this is through the emerging Microsoft framing around Work IQ, Fabric IQ, and Foundry IQ. The language may evolve, but the value point is clear. Work IQ is about understanding how people actually work, not just how a process is documented. Fabric IQ is about grounding intelligence in the business’s underlying data and economic reality. Foundry IQ is where those signals are fused into operational understanding that can actually be acted on. From there, agents stop behaving like blind scripts and start acting with context, supervision, and business-aware prioritization.

That is the connection point to the modern AI worker.

If AI can understand how work really happens, where judgment repeatedly intervenes, where process friction shows up, and how business outcomes are affected, then the enterprise moves beyond simple assistance. It starts building an operating model that learns. That is materially different from bolting generative AI onto disconnected tools and hoping usage alone will create value.

It also sharpens Kumo’s role in this conversation. The opportunity is not to sell AI as magic. It is to help organizations reduce execution friction inside environments they already depend on. That can mean aligning Copilot and Power Platform to actual workflows, shaping the data landscape so better signals are available, clarifying where governance has to come first, and helping leadership validate outcomes in terms they can actually trust.

A More Mature Roadmap to AI Value

The most useful AI roadmap is usually not a giant transformation announcement. It is a disciplined progression.

- Audit actual behavior. Measure adoption by role, workflow, and business function. Identify where people are already finding value and where shadow usage is filling a gap.

- Define outcome metrics before scaling. Track business indicators leadership already understands, such as proposal cycle time, service throughput, time to close, accuracy, or margin improvement.

- Embed AI into the work itself. If usage lives outside the systems people already rely on, adoption becomes noisy and fragile. If it lives inside the flow of work, the signal gets stronger.

- Build maturity in layers. Start with governed experimentation, then workflow integration, then higher-trust automation. Not every organization is ready for autonomous agents on day one.
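The second step in the list above, defining outcome metrics before scaling, can be sketched as a simple baseline-versus-current comparison. This is a minimal illustration under stated assumptions: `OutcomeMetric`, `improvement_pct`, `ready_to_scale`, and the threshold are hypothetical names and choices made for this example, and the sample figures are invented rather than taken from the benchmarks cited in this article.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class OutcomeMetric:
    """One business indicator measured before and after an AI rollout."""
    name: str
    baseline: float               # value captured before the pilot
    current: float                # value captured after the pilot
    lower_is_better: bool = True  # cycle times go down; satisfaction scores go up


def improvement_pct(metric: OutcomeMetric) -> float:
    """Percent improvement relative to baseline, positive when the metric got better."""
    if metric.baseline == 0:
        raise ValueError("baseline must be non-zero")
    change = (metric.current - metric.baseline) * 100 / metric.baseline
    return -change if metric.lower_is_better else change


def ready_to_scale(metrics: list[OutcomeMetric], threshold_pct: float) -> list[str]:
    """Names of metrics whose improvement meets an agreed threshold."""
    return [m.name for m in metrics if improvement_pct(m) >= threshold_pct]


if __name__ == "__main__":
    # Hypothetical pilot data for illustration only.
    pilot = [
        OutcomeMetric("proposal_cycle_days", baseline=10, current=8),
        OutcomeMetric("close_cycle_days", baseline=9, current=9),
        OutcomeMetric("csat_score", baseline=80, current=84, lower_is_better=False),
    ]
    for m in pilot:
        print(f"{m.name}: {improvement_pct(m):+.1f}%")
    print("meets 5% threshold:", ready_to_scale(pilot, threshold_pct=5.0))
```

The design choice worth noting is that the baseline is a required field. A pilot that starts without one cannot produce an improvement figure later, which is why the metric definition has to come before the rollout rather than after it.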

That maturity model matters because enterprise AI is not a switch. It is a progression. The organizations that get real value are usually not the ones doing the most experimentation. They are the ones connecting experimentation to structure, management routines, and measurable business outcomes[1][3].

At the same time, much of the market has a shadow AI problem as much as an innovation problem. Research shows that 73.8% of ChatGPT usage at work happens through non-corporate accounts, while sensitive data leakage into these environments has increased dramatically[2]. That should reframe the conversation. When employees are trying to solve real work problems with ungoverned tools, that is not just a compliance issue. It is also a signal that the organization has not provided a practical, trusted alternative.

Where the Winners Separate Themselves

At the end of the day, this is the real challenge for enterprise AI in 2026.

The market has already proven there is demand. It has already proven there is experimentation. It has already proven there is individual utility. What it has not proven consistently is enterprise discipline.

That is the gap between hype and intrinsic value.

If an organization wants AI to matter, it has to stop asking whether employees like the tool and start asking whether the business is getting better at executing. It has to stop relying on assumptions about time saved and start building measurement around real operational outcomes. It has to stop treating pilots as proof and start treating them as inputs into a broader adoption strategy.

The modern AI-powered worker is not simply someone who uses AI often. It is someone working inside a structure where AI supports clarity, execution, and measurable outcomes. And the modern enterprise is not the one with the most licenses. It is the one with the maturity to connect adoption to business value.

To recap, the winners in this market will not be the organizations with the loudest AI story. They will be the ones that replace vague enthusiasm with grounded execution, replace isolated pilots with operationalized workflows, and replace vibe-based spending with outcome-linked analytics.

That is where ROI starts to become real.

Sources

1. The State of Enterprise AI in 2025: From Experimentation to Intrinsic Value. Larridin. Accessed February 26, 2026.

2. The AI Productivity Paradox: Why Employees Are Moving Faster Than Enterprises. Kore.ai. Accessed February 26, 2026.

3. Maximizing ROI and Adoption in Microsoft Copilot Programs. Correlation One. Accessed February 26, 2026.

4. Enterprises Are Still Deciding if Microsoft 365 Copilot Is Worth It. SAMexpert. Accessed February 26, 2026.

5. Why Microsoft Copilot Adoption Is Lagging: The ROI Dilemma. Petri. Accessed February 26, 2026.
